The Workshop I Built Was Wrong

More detail isn't the fix. The right layer is — and here's how I found it.

Why your AI output keeps coming back flat

Feedback and interactions were off. Not wrong, just flat.

You know the feeling. Something doesn’t feel right, but you can’t quite put your finger on it. Like making your favorite chocolate cake, following the recipe to a tee, using all your favorite ingredients, and it’s still a little off. Nobody sends it back. They still eat the cake. But it’s not the one they talked about the next day.

That’s what was happening to my writing. One week I had the perfect three layers and everyone raved. The next week, nobody said a word, but they still showed up. The comments circled around something without landing. The shares were quieter than expected.

So I did the reasonable thing. I opened my Voice and Tone doc and started tightening it. Added more examples of what I didn’t want. Made the language more specific. Documented a few phrases that kept creeping into drafts I didn’t like.

I closed the doc feeling like I’d fixed the recipe. The next article came back the same way.

It took longer than I’d like to admit to figure out what I was actually doing. The voice wasn’t the problem. The structure was. The way I was presenting information (the flow, the sequence, the shape of an argument) wasn’t in my Voice and Tone doc. It wasn’t in any of my docs.

I had a full set of context docs. Business Snapshot. Audience Overview. Product Strategy. Storytelling Guide. All the right ingredients.

None of it answered the question my articles were actually asking.

The docs weren’t wrong. They were answering the wrong questions entirely.

Why “more context” is enterprise advice for a small business problem

The standard advice makes sense. Document your context. Give AI your voice, your audience, and your business background. The more it knows, the better it performs. Most of us have heard this, tried this, and watched it work, at least partially.

The advice isn’t wrong. It was just written for a different version of the tools and for a different scale of operation.

The frameworks most of us are following came from developers and prompting experts building large systems. Complex pipelines. Processes that needed every variable documented because the cost of getting it wrong was high. Their rules made sense for what they were doing.

When you apply that logic to a small business, it doesn’t tighten your output. It just gives you more to maintain. The docs grow, the updates pile up, and before long, you’re working on your context docs instead of the thing they were supposed to support.

I was spending more time updating my context docs than actually using them.

The goal was never to document everything. It was to remove the guesswork. Those are not the same job.

The over-documentation trap (and the template spiral that followed)

The problem wasn’t that I needed better docs. It was that I was building the wrong kind.

The original approach made sense. AI needed everything handed to it. It couldn’t hold context between sessions, couldn’t infer what you meant, and couldn’t reason about your business without explicit instruction. So you gave it everything. Voice down to specific phrases you’d never use. Audience down to what kept them up at 3 am. Background detailed enough to onboard a new hire.

I was still following those rules when I built my Content Lab (where all my content work lives) and started laying out my LifeOS dashboard (a personal productivity system I was building in Notion). And for a while, it felt like the right call.

When I finally identified the structural problem with my articles, I did the right thing. I started building writing templates. Specific ones for the actual use cases I was working with: experiments I was running, tools I was testing, and prompts I was stress-testing. Each one had its own shape and flow.

And then I kept adding more.

More templates, more use cases, more variations. No core set to anchor them. So the supporting docs (tone, audience, what to stay away from) got muddy. AI couldn’t figure out which flow to follow, so it defaulted to whatever pattern felt closest. The output got inconsistent in a whole new way.

I still catch myself doing this. Starting a new use case and reaching for a new template instead of asking whether one I already have could do the job.

I had graduated from the wrong foundation to the wrong working layer. Different problem, same root cause: building more instead of building better.

The three-layer AI context foundation that fixed it

What finally changed wasn’t the documents themselves. It was the question I was asking about them.

I had been asking “what’s missing from this doc?” every time something went wrong. Which meant every problem sent me back into the same pile of files, adding more to the docs that didn’t need more.

The better question turned out to be: which layer is this problem actually in?

That’s a different question. And it changes where you look.

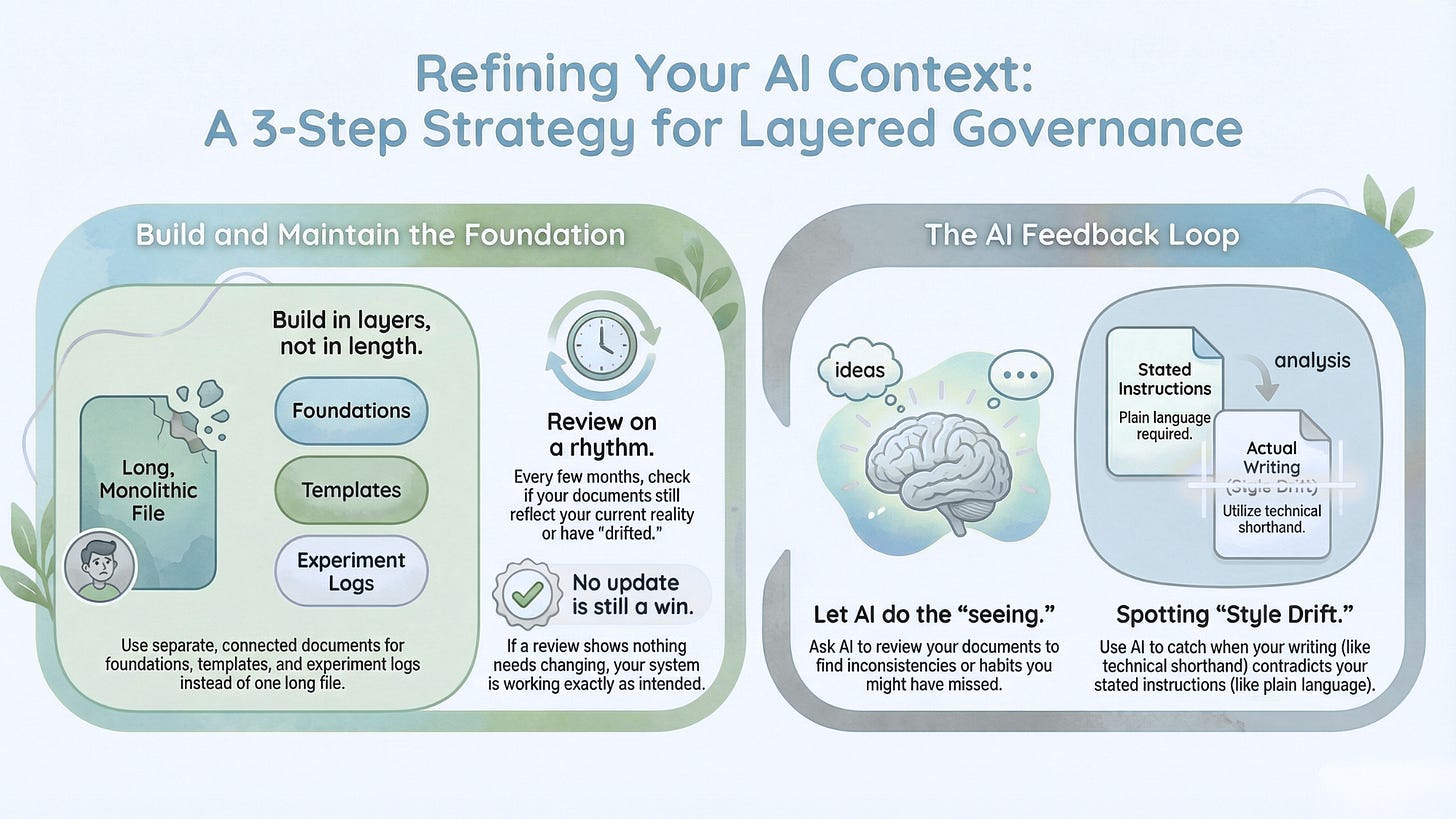

A living context foundation isn’t one long document or even a tight set of core files. It’s built in layers, each one doing a different job:

The foundation is who you are, how you sound, and who you’re talking to. Most people have this, at least partially. It’s table stakes, not the finish line.

The working pieces are how you structure and present information. Templates, offer documents, and the shape of how you think through a problem. This is the layer most people skip entirely. It’s also the one that changed my output quality more than anything in the foundation layer.

Your running record is what you’ve tested, what worked, what didn’t, and what you learned. It’s the layer that lets AI push back rather than just execute. Without it, you have a capable partner with no history of how your business actually works.

The foundation tells AI who you are. The working pieces teach it how you think. The running record gives it something to measure against.
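If it helps to see the "which layer is this problem in?" question as something concrete, here is a minimal sketch in Python. It is an illustration, not a tool I actually run. The symptom labels, the `docs_to_open` helper, and the way each document is assigned to a layer are all assumptions made for the example; only the three layer names come from the article.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    FOUNDATION = "foundation"          # who you are, how you sound, who you're talking to
    WORKING_PIECES = "working pieces"  # how you structure and present information
    RUNNING_RECORD = "running record"  # what you've tested and what you learned


@dataclass
class ContextDoc:
    name: str
    layer: Layer


# Assumed assignment of documents to layers, for illustration only.
DOCS = [
    ContextDoc("Voice and Tone", Layer.FOUNDATION),
    ContextDoc("Audience Overview", Layer.FOUNDATION),
    ContextDoc("Business Snapshot", Layer.FOUNDATION),
    ContextDoc("Writing Templates", Layer.WORKING_PIECES),
    ContextDoc("Experiment Notes", Layer.RUNNING_RECORD),
]

# Hypothetical symptom labels; swap in whatever you actually notice in your output.
SYMPTOM_TO_LAYER = {
    "sounds unlike me": Layer.FOUNDATION,
    "wrong audience": Layer.FOUNDATION,
    "flat structure": Layer.WORKING_PIECES,
    "inconsistent flow": Layer.WORKING_PIECES,
    "never pushes back": Layer.RUNNING_RECORD,
}


def docs_to_open(symptom: str, docs: list[ContextDoc]) -> list[str]:
    """Return only the documents in the layer the symptom points to."""
    layer = SYMPTOM_TO_LAYER.get(symptom)
    if layer is None:
        return []  # unknown symptom: decide the layer by hand before editing anything
    return [doc.name for doc in docs if doc.layer is layer]


print(docs_to_open("flat structure", DOCS))  # ['Writing Templates']
```

The point of the sketch is the lookup step: a structure problem sends you to one document in the working pieces, not back into the three foundation files.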

When I stopped patching the foundation and started building the missing layer, the output settled. The articles found their shape. The foundation stopped being something I was constantly fixing and started being something I was building on.

How to audit and update your AI context docs

Three shifts worth making, in order:

1. Build in layers, not in length.

Start with your foundation if you don’t have one. If you do have one, look at what’s next. Do you have a writing template, or a document that captures how you structure information? Do you have anything that tracks what you’ve tried and what you learned?

Each layer lives in its own document. Not one long file that keeps growing. A connected set you can open, update, and put back without touching the rest.

2. Review on a rhythm, update only what drifted.

Every few months, unless something significant changes. Open the relevant document, ask AI to review it against what’s new. Sometimes nothing needs updating — and that’s the system working, not the system failing. You’re not looking for what’s wrong. You’re checking whether it still reflects where you actually are.

3. Let AI do the seeing you can’t.

This one surprised me. When I started adding experiment notes to my context — what I was building, testing, learning — AI flagged something I hadn’t noticed myself. I had been telling my agents to break everything down into plain language, no jargon, keep it accessible. But when I wrote those experiment notes, I was writing like I was back in a developer meeting. Project briefs. Technical shorthand. The language of someone deep inside the work.

AI couldn’t reconcile the instruction with the example. And just like that, I couldn’t pretend I didn’t know. My content had been talking at my audience instead of with them — and no amount of audience profiling would have caught it. The problem wasn’t in the profile. It was in how I was showing up in my own docs.

Here’s a prompt you can use to audit a document you already have:

I'm going to share one of my context documents with you.

Once I share it, tell me:

1. Which layer does this belong in: foundation (who I am, voice, audience), working pieces (how I structure information, templates, offers), or running record (what I've tested and learned)?

2. What's in here that still feels current and accurate?

3. What feels like it was written for an older version of my business?

4. What's missing that would help you act as a real partner, not just a generator?

Don't tell me what to fix yet. Just tell me what you see.

The companion piece walks through building each layer from scratch: what goes in each document, the prompts for creating them, and what they actually look like.
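For anyone who keeps their context docs in files, the review rhythm and the audit prompt above could be wired together roughly like this. It is a sketch under stated assumptions: the 90-day interval is my stand-in for "every few months", the prompt text is a lightly condensed version of the one above, and the function names and document names are made up for the example.

```python
from datetime import date, timedelta

# Assumption: "every few months" is treated as 90 days.
REVIEW_INTERVAL = timedelta(days=90)

# Lightly condensed version of the audit prompt from the article.
AUDIT_PROMPT = """I'm sharing one of my context documents with you. Tell me:
1. Which layer does this belong in: foundation, working pieces, or running record?
2. What's in here that still feels current and accurate?
3. What feels like it was written for an older version of my business?
4. What's missing that would help you act as a real partner, not just a generator?
Don't tell me what to fix yet. Just tell me what you see."""


def due_for_review(last_reviewed: dict[str, date], today: date,
                   interval: timedelta = REVIEW_INTERVAL) -> list[str]:
    """Return the names of documents whose last review is at least `interval` old."""
    return sorted(name for name, reviewed in last_reviewed.items()
                  if today - reviewed >= interval)


def build_audit_message(doc_name: str, doc_text: str) -> str:
    """Combine the audit prompt with one document, ready to paste into a chat."""
    return f"{AUDIT_PROMPT}\n\n--- {doc_name} ---\n{doc_text.strip()}"


# Hypothetical review log: document name -> date it was last reviewed.
review_log = {
    "Voice and Tone": date(2024, 1, 10),
    "Writing Templates": date(2024, 5, 1),
}

for name in due_for_review(review_log, today=date(2024, 6, 1)):
    print(f"Due for review: {name}")  # Due for review: Voice and Tone
```

Only the document that has drifted past the interval gets flagged, which matches the idea of updating what drifted and leaving the rest alone.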

The hidden cost of outdated context docs

Every session where AI guesses wrong, you’re editing. Every month a doc ages unreviewed, you’re re-explaining. Every output that’s almost right but not quite — that’s not a prompt problem. That’s a foundation problem.

The harder cost to see: you can stay busy tweaking docs and feel like you’re making progress. But if the missing layer never gets built, nothing changes. You’re not stuck because you stopped trying. You’re stuck while trying the wrong thing.

That friction you keep feeling isn’t weakness. It’s pointing at exactly what needs attention.

Key takeaways

If you take away only four things from this, make them these.

The docs aren’t wrong — they’re answering the wrong questions. More context doesn’t necessarily lead to better results. The goal was never to document everything. It was to remove the guesswork. Those aren’t the same job.

You can’t fix a structure problem in a voice document. When output is off, identify which layer the problem is actually in before you touch anything.

A living foundation is built in layers, not in length. The foundation tells AI who you are. The working pieces teach it how you think. Your running record gives it something to measure against. Each one is a different document doing a different job.

AI can surface contradictions you can’t see from inside. When your instructions and your examples don’t match, AI will find it. Add your experiment notes, your test results, your real work — and let it show you what you’re missing.

Start here: find the layer you’re missing

Most people built their context docs once and moved on. Going back to revisit them feels like admitting they weren’t enough.

They were enough. For then. The tools changed, and the job changed with them. That’s not a failure — it’s just where we are.

The shift that’s actually hard isn’t the tactical one. It’s accepting that something you built carefully and deliberately might need a new layer — not because you did it wrong, but because you’ve outgrown what it was designed for.

You’re allowed to find that hard.

What’s one document or template you built a while back that still makes sense on paper — but you’re starting to suspect isn’t working for where you are now? Not a general category. A specific thing. Drop it in the comments and I’ll tell you which layer I’d look at first.

If you want to build the layers from scratch, the companion piece walks through all three — what goes in each document, the prompts to create them, and what done looks like.